If you've been following the rapid rise of AI video generation, you've probably noticed how quickly new models are entering the conversation. Some arrive with polished demos and clear backing. Others show up more quietly—yet still manage to spark curiosity.

That's exactly what's happening with Happy Horse 1.0.

At the same time, Seedance 2.0, developed within ByteDance's AI ecosystem, continues to expand its reach with a more structured, platform-driven approach to video generation.

So how do these two actually compare—and more importantly, what do we really know about them today?

Happy Horse 1.0: The Open-Source Challenger

What is Happy Horse 1.0?

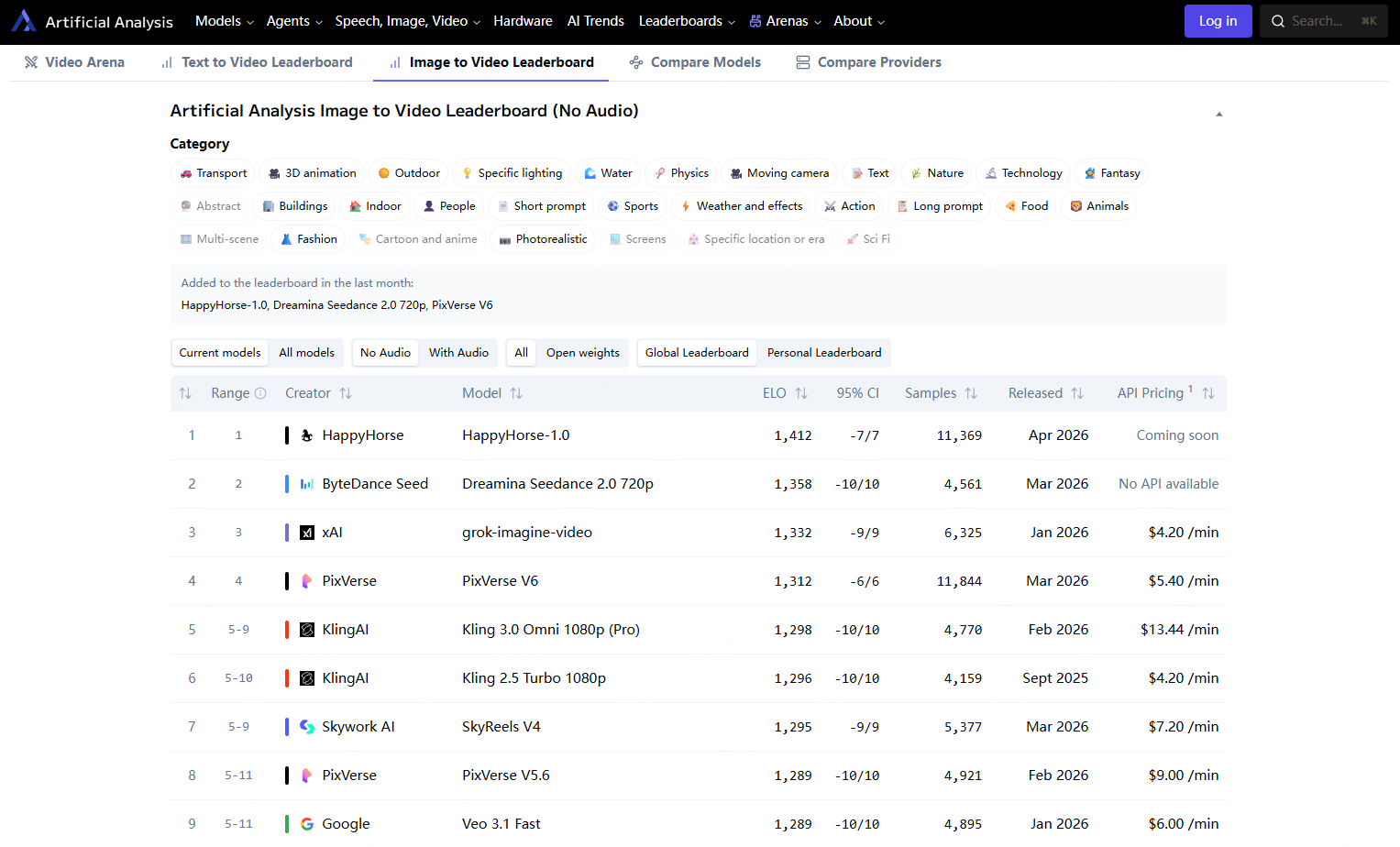

Happy Horse 1.0 is described as a large-scale AI video generator that began gaining attention in early 2026. It has been turning heads by climbing to the top of the Artificial Analysis Video Arena rankings. Much of the available information comes from project-level materials and community discussions, rather than formal publications or widely recognized technical documentation.

Based on those sources, the model is positioned as an open-source alternative in a space that's otherwise dominated by closed, API-based systems.

That positioning alone is enough to draw interest—especially among developers and smaller teams looking for more control over their workflows.

Key Features & Technical Specs

Publicly available descriptions suggest that Happy Horse 1.0 focuses on combining video and audio generation into a single pipeline.

Some commonly cited capabilities include:

📹 High-resolution video output (often described as up to 1080p).

🔊 Integrated audio-visual generation.

🧋 Multi-language lip synchronization.

⚡ Relatively fast generation speeds on high-end hardware.

That said, it's important to note that most of these specifications originate from project descriptions and have not yet been broadly validated by independent third-party benchmarks.

In practical terms, this means the model looks promising—but still lacks the kind of transparent testing data seen with more established systems.

The Open-Source Advantage

The open-source positioning is where things get interesting for developers and businesses alike.

If the release materializes as described, it would reportedly include:

🧡 Both the base model and a distilled model.

🧡 A super-resolution module.

🔍 Full inference code.

💻 Commercial usage rights—completely free.

In that scenario there would be no licensing fees and no subscription. You could inspect the code, modify it, deploy it on your own servers, and use anything you create commercially.

For indie developers, small studios, or anyone tired of being locked into expensive proprietary platforms, this is a pretty big deal.

Current Availability

Currently, several websites claim to be the Happy Horse 1.0 homepage, but the development team has remained pseudonymous and has not confirmed which one is legitimate. No recognized organization has stepped forward to claim ownership of the project. Readers are advised to wait for official announcements before placing trust in any particular site.

As a project described as open source, Happy Horse 1.0 is expected to release its code and model weights through popular developer platforms, with GitHub and Hugging Face the likely hosts as the project moves forward.

Seedance 2.0: ByteDance's Multimodal Approach

ByteDance AI Video Strategy

Seedance 2.0 is ByteDance's latest AI video generation model. If the name sounds familiar, that is because Seedance has been part of ByteDance's broader "Seed" AI initiative for a while now.

What's different with Seedance 2.0? ByteDance has doubled down on ecosystem integration. This isn't just a standalone video tool—it's designed to slot seamlessly into their content creation and distribution empire, which includes CapCut, Douyin, and TikTok.

Their strategy is all about reducing friction: generate, edit, and publish without leaving the ByteDance ecosystem.

Recently, Seedance 2.0 has started expanding access through authorized global partners, signaling a gradual shift beyond its previously limited regional rollout.

Core Capabilities

Seedance 2.0 brings some serious capabilities to the table:

1️⃣ Multimodal inputs: Text, images, videos, and audio can all serve as generation references.

2️⃣ Director-level controls: Fine-grained control over camera movements, lighting, and scene composition.

3️⃣ Audio-visual joint generation: Native synchronized audio and video output.

4️⃣ Extended duration: Clips up to 20 seconds.

5️⃣ Cinema-grade output: Professional color grading suitable for broadcast.

ByteDance evaluates performance using its proprietary SeedVideoBench-2.0, measuring everything from visual quality to temporal consistency.

Integration Ecosystem

Here's where Seedance 2.0 has a real advantage if you're already in the ByteDance ecosystem:

1️⃣ CapCut integration: Access directly within the popular video editor.

2️⃣ Dreamina: Dedicated web interface for AI video creation.

3️⃣ API access: Developer-friendly endpoints through ByteDance Cloud.

4️⃣ Cross-platform publishing: Direct export to Douyin and TikTok.

If your workflow centers around TikTok or Douyin, this tight integration can save serious time.

Current Availability

Seedance 2.0 lives within ByteDance's product ecosystem. The model powers several platforms, including Dreamina (which integrates with CapCut AI) and Jimeng. Access availability depends on your region, account standing, and how far the current rollout has reached in your market.

This is a proprietary video generation system, with no downloads and no self-hosting options. Instead, ByteDance offers Seedance 2.0 as a managed cloud-based service, restricting access to official channels and approved integrations.

Where They Stand: Comparing the Known

| Feature | Happy Horse 1.0 | Seedance 2.0 |
|---|---|---|
| Developer | Unclear / project-based | ByteDance Seed |
| Model Access | Described as open-source (verification limited) | Proprietary, platform-dependent |
| Input Types | Text, Image (reported) | Text, Image, Audio, Video |
| Audio-Visual Generation | Yes (reported) | Yes |
| Output Quality | Up to 1080p (claimed) | Up to ~2K (platform-dependent) |
| Narrative Control | Limited public detail | Stronger multi-shot consistency |
| Commercial Use | Not fully defined | Platform license |

This comparison reflects publicly available information, rather than fully verified internal benchmarks. As both models continue to evolve, these distinctions may become clearer.
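For readers who track these claims as the two projects evolve, the comparison above can be kept as structured data. The following is a minimal Python sketch, not anything published by either project: the `Status` labels, the `Claim` type, and both helper functions are hypothetical names introduced here, and the status attached to each row is only a reading of the qualifiers in the comparison ("reported", "claimed", "not fully defined"), not independent verification. Only a subset of rows is included.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    # Labels are assumptions made for this sketch, derived from the
    # qualifiers used in the comparison, not from any benchmark.
    STATED = "stated"      # listed without a qualifier
    REPORTED = "reported"  # marked "(reported)" or "(claimed)"
    UNCLEAR = "unclear"    # limited or undefined public detail


@dataclass(frozen=True)
class Claim:
    model: str
    feature: str
    value: str
    status: Status


CLAIMS = [
    Claim("Happy Horse 1.0", "Model Access", "Open-source", Status.REPORTED),
    Claim("Happy Horse 1.0", "Input Types", "Text, Image", Status.REPORTED),
    Claim("Happy Horse 1.0", "Audio-Visual Generation", "Yes", Status.REPORTED),
    Claim("Happy Horse 1.0", "Output Quality", "Up to 1080p", Status.REPORTED),
    Claim("Happy Horse 1.0", "Commercial Use", "Not fully defined", Status.UNCLEAR),
    Claim("Seedance 2.0", "Model Access", "Proprietary", Status.STATED),
    Claim("Seedance 2.0", "Input Types", "Text, Image, Audio, Video", Status.STATED),
    Claim("Seedance 2.0", "Audio-Visual Generation", "Yes", Status.STATED),
]


def needs_verification(claims):
    """Return every claim that carries a qualifier or lacks detail."""
    return [c for c in claims if c.status is not Status.STATED]


def summarize(claims):
    """Count claims per (model, status label) pair."""
    counts = {}
    for c in claims:
        key = (c.model, c.status.value)
        counts[key] = counts.get(key, 0) + 1
    return counts


if __name__ == "__main__":
    for claim in needs_verification(CLAIMS):
        print(f"{claim.model}: {claim.feature} -> {claim.value} [{claim.status.value}]")
```

Updating a row's status when an official release or third-party benchmark appears keeps the distinction between stated, reported, and unclear claims explicit over time.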

What This Means for Creators Today

Open From the Start (Happy Horse)

For developers and studios that want freedom, customization, or integration into their own pipelines, Happy Horse is a standout choice on paper. Greater flexibility and fewer platform restrictions would make it well suited to experimentation, academic use, or custom applications, assuming the full release materializes as described.

Storytelling & Narrative Control (Seedance)

If your goal is multi-scene storytelling—multiple shots, character consistency, audio cohesion—then Seedance 2.0's multimodal approach holds real promise. Generators that accept reference files alongside text are easier to control once you learn the workflow.

Looking Ahead – Who Will Lead the AI Video Revolution?

In 2026, two separate trends are emerging:

- Open-source models like Happy Horse 1.0 lowering the barrier to entry and empowering research and custom product builds.

- Proprietary systems like Seedance 2.0 focusing on polished outputs and multimodal storytelling for mainstream creators and brands.

Expect the lines between ease of use and quality to blur as both communities evolve. Users should pay attention to support ecosystems, API access, and licensing changes, because how you use these models could matter as much as what they generate.

Final Thoughts

Based on what we can verify, both models represent interesting directions in the 2026 AI video space—but they cater to different priorities. Happy Horse 1.0 emphasizes openness and potential. Seedance 2.0 focuses on structure and control.

Rather than focusing on a definitive winner, it is more practical to look at how each model fits specific use cases—and where the current gaps in transparency or availability may affect real-world adoption.

In a space that is still evolving at this pace, a clear understanding of what is confirmed, what is inferred, and what is still uncertain tends to be more useful than broad claims about performance.